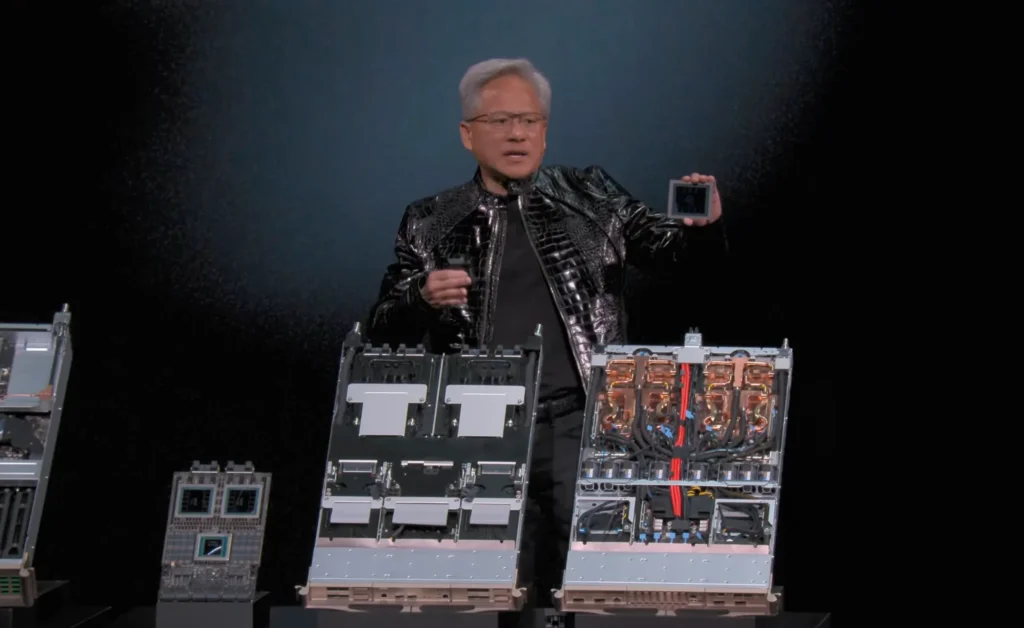

At CES 2026, Nvidia CEO Jensen Huang announced Rubin, the company’s next-generation AI computing platform.

Rubin is named after the astronomer Vera Rubin. The architecture succeeds Blackwell and combines six co-designed chips built to power large-scale AI supercomputers. Production is already underway, and availability begins in the second half of 2026.

The Rubin Platform Announcement

Nvidia opened CES 2026 by launching the Rubin platform, which it describes as its first extreme co-designed system: six specialized chips engineered together to function as a single AI supercomputer. Huang framed the platform as a response to rapidly growing demand for AI compute. Compared with Blackwell, Nvidia claims Rubin delivers up to 10x lower cost per inference token and needs 4x fewer GPUs to train mixture-of-experts (MoE) models.
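The following Python sketch applies those claimed ratios to a hypothetical Blackwell baseline. The baseline values (a cost of $2.00 per million tokens and a 10,000-GPU MoE training run) are invented placeholders, not published figures, and the function name `rubin_best_case` is illustrative. Because Nvidia frames the token-cost figure as "up to 10x", the result is a best case, not an expected outcome.

```python
# Sketch: apply Nvidia's claimed Rubin-vs-Blackwell ratios to a HYPOTHETICAL
# Blackwell baseline. The baseline values are placeholders, not real prices
# or cluster sizes.

TOKEN_COST_REDUCTION = 10  # "up to 10x lower inference token costs" (claimed best case)
GPU_COUNT_REDUCTION = 4    # "4x fewer GPUs" for MoE training (claimed)


def rubin_best_case(blackwell_cost_per_m_tokens: float, blackwell_gpus: int) -> tuple[float, int]:
    """Return (cost per million tokens, GPU count) under the claimed ratios."""
    cost = blackwell_cost_per_m_tokens / TOKEN_COST_REDUCTION
    # Ceiling division: a fractional GPU still requires one more physical GPU.
    gpus = -(-blackwell_gpus // GPU_COUNT_REDUCTION)
    return cost, gpus


if __name__ == "__main__":
    # Hypothetical baseline: $2.00 per 1M tokens, 10,000 GPUs for an MoE training job.
    cost, gpus = rubin_best_case(2.00, 10_000)
    print(f"best-case cost per 1M tokens: ${cost:.2f}")        # $0.20
    print(f"GPUs for the same MoE training job: {gpus}")        # 2500
```

The two ratios describe different workloads (inference cost versus MoE training), so they should not be multiplied together into a single "combined" gain.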

The platform is now in full production. Partners including AWS, Google Cloud, Microsoft, Oracle, and CoreWeave plan to deploy Rubin-based systems in the second half of 2026. The early reveal keeps Nvidia on its annual release cadence for AI platforms.

Key Components of the Rubin Architecture

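The Rubin platform integrates six chips designed to operate together at rack scale: the Vera CPU, the Rubin GPU, the NVLink 6 Switch, the ConnectX-9 SuperNIC, the BlueField-4 DPU, and the Spectrum-6 Ethernet Switch.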

Vera CPU and Rubin GPU

The Vera Rubin superchip pairs one Vera CPU (88 custom Olympus Arm cores, 227 billion transistors) with two Rubin GPUs (336 billion transistors each). The superchip is built on TSMC’s 3nm process, and each GPU supports up to 288GB of HBM4 memory, bringing a major increase in memory bandwidth.

Networking and Infrastructure Chips

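The other four chips handle networking and infrastructure: the NVLink 6 Switch (GPU-to-GPU interconnect within the rack), the ConnectX-9 SuperNIC and Spectrum-6 Ethernet Switch (scale-out networking), and the BlueField-4 DPU (offloading networking, storage, and security tasks). Because all six chips are designed together, Nvidia says the platform runs gigascale AI factories more efficiently and adds features such as rack-scale confidential computing.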

The flagship Vera Rubin NVL72 rack-scale system operates the entire rack as a single coherent machine. Nvidia claims 5x greater inference performance than Blackwell, along with improved training performance.
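Those memory figures add up quickly at rack scale. The Python sketch below multiplies them out, taking 288GB per GPU and two GPUs per superchip from the text. It also assumes, as the NVL72 name suggests, 72 Rubin GPUs (36 superchips) per rack. That rack composition is an assumption, not a figure from this article. Results use decimal units (1 TB = 1,000 GB) and the maximum per-GPU capacity.

```python
# Sketch: aggregate HBM4 capacity.
# From the text: up to 288 GB per Rubin GPU, two GPUs per Vera Rubin superchip.
# ASSUMPTION: the "NVL72" rack contains 72 Rubin GPUs.

HBM4_GB_PER_GPU = 288
GPUS_PER_SUPERCHIP = 2
GPUS_PER_RACK = 72  # assumption based on the product name

superchips_per_rack = GPUS_PER_RACK // GPUS_PER_SUPERCHIP
gb_per_superchip = HBM4_GB_PER_GPU * GPUS_PER_SUPERCHIP
gb_per_rack = HBM4_GB_PER_GPU * GPUS_PER_RACK

print(f"superchips per rack: {superchips_per_rack}")                      # 36
print(f"HBM4 per superchip: {gb_per_superchip} GB")                       # 576 GB
print(f"HBM4 per rack: {gb_per_rack} GB (~{gb_per_rack / 1000:.1f} TB)")  # 20736 GB (~20.7 TB)
```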

Performance and Efficiency Gains

Rubin is aimed at agentic AI, advanced reasoning, and multi-step models, with Nvidia emphasizing gains in power efficiency and scalability. The company claims significant reductions in training time and token-generation cost. These savings are supported in part by new technologies such as Inference Context Memory Storage, which is designed to retain and share the key-value (KV) cache that models build up during inference.

Nvidia says these advances make Rubin suited to trillion-parameter models and always-on AI infrastructure.
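The KV cache mentioned above shows why dedicated context storage matters. The Python sketch below uses the standard size estimate for a transformer's key-value cache. Each layer holds one key tensor and one value tensor, and each tensor has batch × KV heads × sequence length × head dimension elements. The model configuration is entirely hypothetical. The sketch does not describe how Inference Context Memory Storage works internally.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int, bytes_per_elem: int) -> int:
    """Standard KV-cache size estimate: one K and one V tensor per layer."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


if __name__ == "__main__":
    # HYPOTHETICAL configuration: 80 layers, 8 KV heads (grouped-query attention),
    # head_dim 128, a 128K-token context, 16 concurrent sequences,
    # 2 bytes per element (FP16/BF16).
    size = kv_cache_bytes(80, 8, 128, 128 * 1024, 16, 2)
    print(f"KV cache: {size / 1e9:.1f} GB")  # KV cache: 687.2 GB
```

With these assumed settings, the cache alone comes to roughly 687 GB. That is more than twice the 288GB of HBM4 on a single Rubin GPU, before counting model weights. This kind of memory pressure motivates storing and sharing KV cache outside GPU memory.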

Ecosystem and Future Deployments

Major cloud providers and partners are preparing Rubin deployments, including Microsoft for its Fairwater AI superfactories. Nvidia is also collaborating with Red Hat to optimize the software stack for enterprise AI.

Nvidia positions the platform as a foundation for million-GPU environments, with Rubin Ultra to follow in 2027.

In summary, the Rubin platform unveiled at CES 2026 signals a push toward more efficient, scalable AI computing and strengthens Nvidia’s leadership in a fast-growing AI industry.